Air France 447 and Deepwater Horizon: Considerations For Team Readiness

In the dark, early morning hours of June 1, 2009, Air France Flight 447, en route from Rio de Janeiro to Paris over the Atlantic Ocean, lost some of its instrumentation while attempting to dodge a thunderstorm. The flight crew became confused and lost control of the aircraft. The aircraft pitched upward and stalled. Unable to recover, the Airbus A330 fell 38,000 feet, hitting the water at 124 miles per hour and killing all 228 people on board.

Less than one year later, on the night of April 20, 2010, an explosion occurred on the Deepwater Horizon oil drilling rig 49 miles off the coast of Louisiana. Eleven people were killed and many were injured. Oil from the well gushed uncontrollably 5,000 feet below the water’s surface. Before the well was capped on July 15th, more than 4 million barrels of oil flowed into the Gulf of Mexico. The oil destroyed wildlife and wreaked havoc on the people, landscapes, and businesses of the Gulf Coast area.

Two horrific disasters. Each involved a highly skilled team of people working together to perform complex job operations. Each team used sophisticated equipment and technology, with little margin for error. These events are disturbing, not just because they involved so much death, horror, and destruction, but because they happened at all. Transatlantic commercial airline flying and deepwater oil drilling are not new endeavors. We might be more understanding if these tragedies had occurred a hundred years ago, in the early days of flying and offshore drilling. Instead, they happened in the 21st century, far down the experience curve, with so much proven technology, operational management know-how, and training capability at our disposal. Why?

These incidents should stir team members and team leaders to action. All teams, not just those in the aviation and oil industries, should examine these incidents and ask, “What lessons can my team learn from these incidents? What can we do to make sure that our team does not fall victim to similar circumstances?”

Following the summaries of the incident reports shown below, we look at considerations for assessing team readiness to help prevent similar incidents from happening.

TeamReadiness

A team achieves readiness when each individual member can do his or her job — on time, safely, according to specification, within budget, and as expected. Achieving team readiness means that jobs get done in the proper way and with the desired results. Team members must have the proper know-how and ability. They must display the appropriate behavior in the course of performing their duties. What do the AF 447 and Deepwater incidents reveal about your team’s level of readiness? What can each of us learn from these tragedies that we can apply to our own teams to prevent problems and improve readiness capability?

1. Collaboration.

- Both the AF 447 and Deepwater Horizon Reports indicate that team members had problems working together to perform their duties, and that a lack of effective communication and involvement among team members contributed to the accidents.

- There appear to have been problems with teamwork, both internal to some of the companies on Deepwater Horizon and involving external team members (e.g., the general contractor not collaborating with subcontractors).

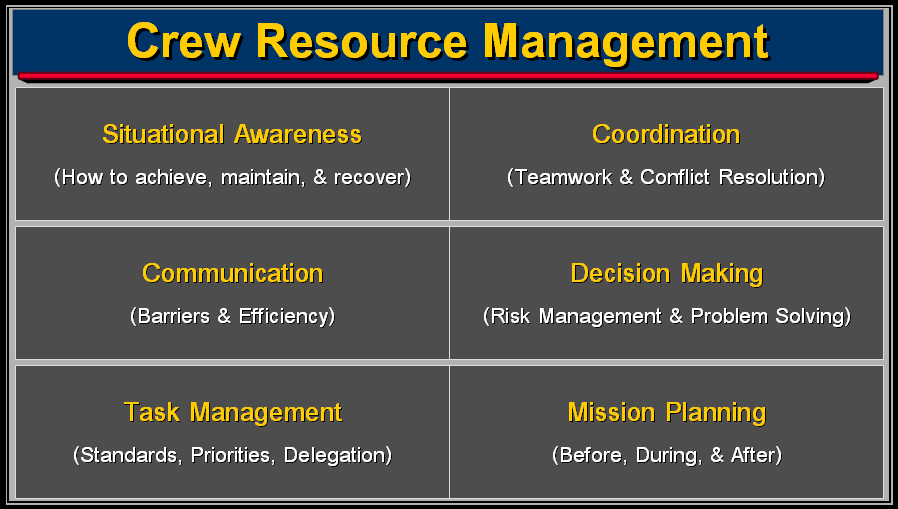

- The AF 447 Report cited a lack of operational instructions for the copilots, no Crew Resource Management (CRM) training for the circumstances, and an absence of a hierarchy and of effective task-sharing in the cockpit, all of which strongly contributed to the low level of synergy.

- The Deepwater Report cites “the overarching failure of management” and the need for “improving the ability of individuals involved to identify the risks they faced, and to properly evaluate, communicate, and address them.”

- Teams should consider having Mission-Specific Crew Resource Management systems in place for all personnel on a project (General Contractor and Sub-contractor team members).

- Crew Resource Management systems are intended to foster collaboration, cooperation, and synergy among team members. The goal is to obtain optimal levels of communication, problem solving, and decision making among team members so they can accomplish their mission safely, successfully, and as expected.

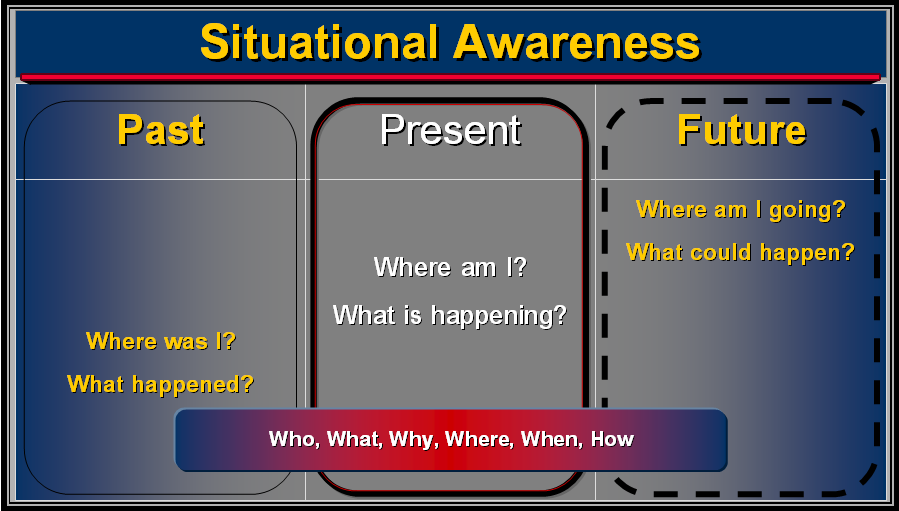

2. Situational Awareness.

- Situational Awareness is a key component of Crew Resource Management. It requires having the correct understanding of what is going on in a given environment, time, and space so that the appropriate action can be taken.

- Situational Awareness requires that people have access to necessary information (past and present). It requires that they interpret that information to control, influence, predict, or respond appropriately to what is happening. It also requires that they assess what is likely to happen in the future and, especially, that they consider the risks of what could go wrong.

- It appears that neither the Air France 447 nor the Deepwater Horizon team was fully aware of the situation it was in (e.g., airplane stall, cement job reliability, kick detection). As a result, team members did not take the appropriate actions to prevent the disasters.

- Teams should consider their ability to have Situational Awareness in each step of the jobs they have been charged with executing. They must deal with the potential risks, hazards, and incidents in which they may find themselves.

3. Indicators & Instrumentation.

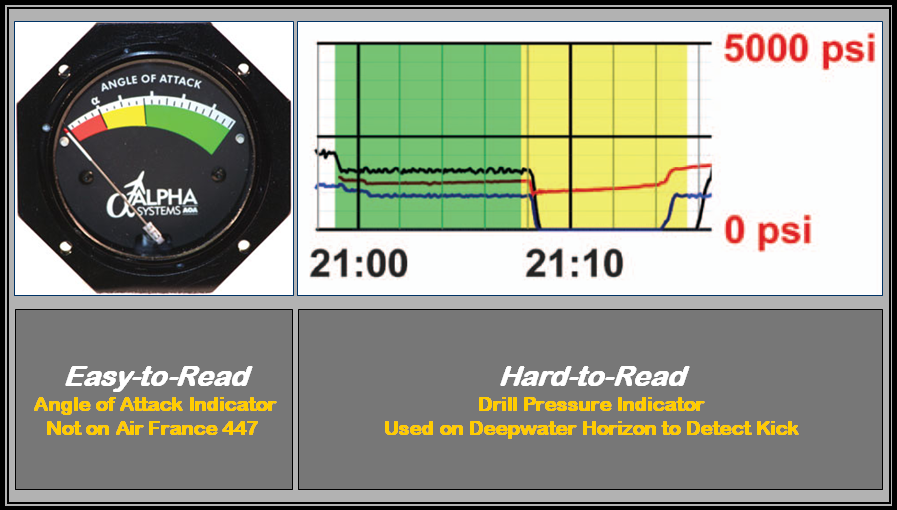

- To achieve situational awareness, team members need indicators and instruments that provide them with good information so they can make the appropriate decisions and take the appropriate action at the appropriate time. They need indicators that are easy to read, understand, and interpret; that are reliable and work in all operating conditions and environments; and that are helpful in managing all likely situations.

- Team members should have an instrument panel with the indicators they need for each job, mission, or situation they may encounter. What does your instrument panel look like?

- For a given job or situation, ask:

  - Do team members have the information they need?

  - Is the information easy to access and read?

  - Is the information easy to understand and interpret?

  - Is the information reliable and available in all conditions (e.g., the AF 447 pitot tubes froze)?

  - To protect against failure and ensure reliability, do you need redundant, backup, or alternative indicators?

- It appears that neither the Air France 447 nor the Deepwater Horizon team was fully aware of the situation it was in (e.g., airplane stall, cement job reliability, kick detection). Situational Awareness was compromised because of indicator and instrumentation problems. Each team lacked indicators that might have helped (e.g., an angle-of-attack indicator), had indicators that failed (e.g., airspeed indicators), or had indicators that were confusing to read and understand and that did not clearly warn of trouble (e.g., kick detector).

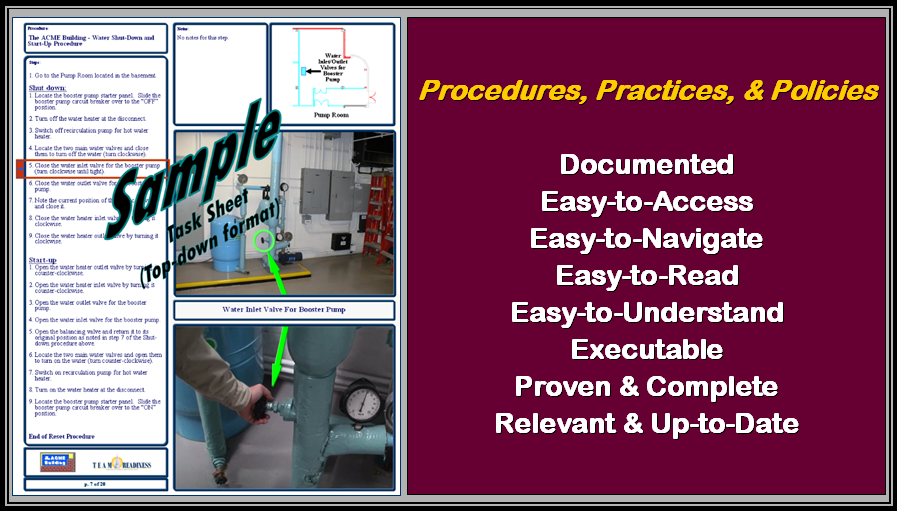

4. Procedures, Practices, & Policies.

- There were problems with procedures on both AF 447 and Deepwater Horizon. In key situations, procedures did not exist, were not used, or were not helpful.

- Teams should consider the procedures, best practices, lessons learned, and policies they need to be effective at each step in their job process and for the incidents or situations they may encounter.

- Consider the comment from the Deepwater Horizon investigation, that the safety manual was “unstructured,” “hard to navigate,” and “not written with the end user in mind”; and that there is “poor distinction between what is required and how this should be achieved.” As the graphic above illustrates, these types of issues need to be addressed when creating documentation.

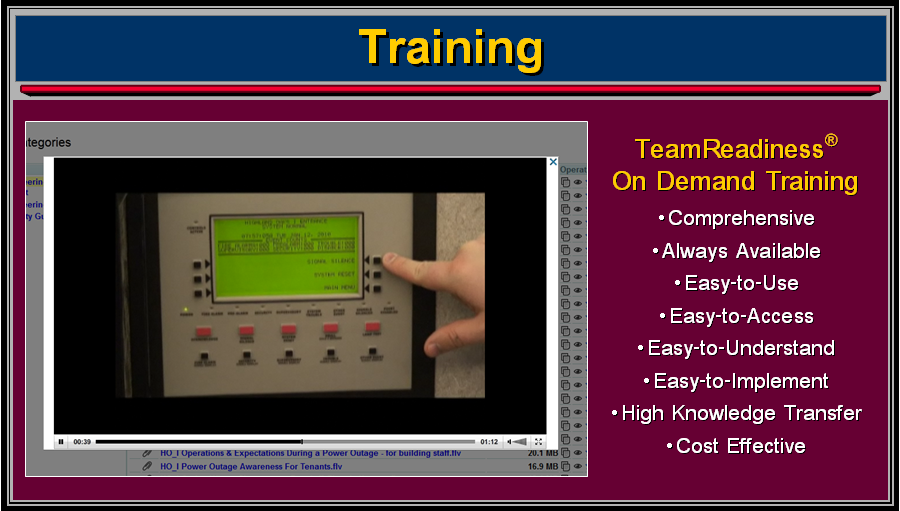

5. Training.

- In the case of AF 447, the copilots had not received any high-altitude training in the “Unreliable IAS [Indicated Air Speed]” procedure or in manual aircraft handling. Similarly, there were issues with task-sharing and Crew Resource Management elements. These things appear to have significantly contributed to the crew’s inability to recover from the stall and avoid the disaster. Self-learning training modules were among the items recommended by the investigating committee.

- On Deepwater Horizon, team members were not trained on how to conduct the negative-pressure test or adequately trained on how to respond to mud spewing from the rig floor. Training might have helped to avoid or lessen the impact of the explosion. There were also many deficiencies in management systems, teamwork, risk assessment, leadership, and decision making.

- Consider your training needs and the reasons that training is not done on a particular issue in your team. Good training programs do not have to be cost-prohibitive, difficult to implement, or lacking in scope. Give your team the training it needs.

Let TeamReadiness help. We can assist with the entire process to help team members grow their capabilities quickly and cost-effectively and achieve team readiness!


DMW